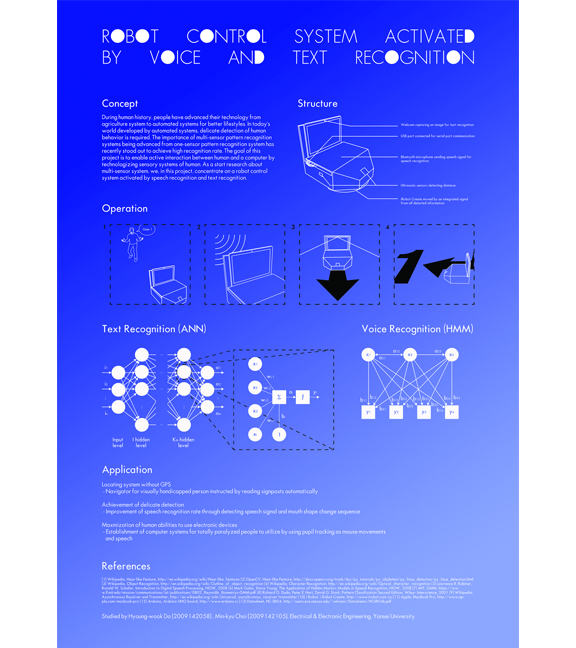

To bridge the physical and digital worlds, a computer must be able to communicate with the real world. This idea led our team to explore how a computer can perceive information about its surroundings. We decided to build a robot system that is activated and controlled by speech recognition and by recognition of text in images.

Our team implemented this system using a MacBook Pro (late 2011), an iRobot Roomba, and an Arduino board connected to three ultrasonic sensors mounted on the front and both sides of the robot's body.
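A minimal sketch of how readings from three ultrasonic sensors (front, left, right) could be turned into a steering decision, written in Python for illustration. It is not the project's actual code: the function names, the 20 cm threshold, and the decision rule are all assumptions.

```python
# Hypothetical sketch: names, threshold, and decision rule are assumptions,
# not taken from the project.

# Speed of sound in air, roughly 343 m/s, expressed in cm per microsecond.
SPEED_OF_SOUND_CM_PER_US = 0.0343


def echo_to_cm(echo_us: float) -> float:
    """Convert an ultrasonic round-trip echo time (microseconds) to distance (cm)."""
    # The pulse travels to the obstacle and back, so halve the path length.
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2


def choose_action(front_cm: float, left_cm: float, right_cm: float,
                  threshold_cm: float = 20.0) -> str:
    """Pick a coarse steering action from three distance readings."""
    if front_cm > threshold_cm:
        return "forward"  # path ahead is clear
    if left_cm <= threshold_cm and right_cm <= threshold_cm:
        return "reverse"  # blocked on all three sides
    # Front is blocked: turn toward the side with more free space.
    return "left" if left_cm >= right_cm else "right"


if __name__ == "__main__":
    # Front blocked, more room on the left than on the right.
    print(choose_action(front_cm=10, left_cm=40, right_cm=15))
```

In a setup like this one, the distances would typically be measured on the Arduino and sent to the host computer, which would then issue drive commands to the Roomba.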

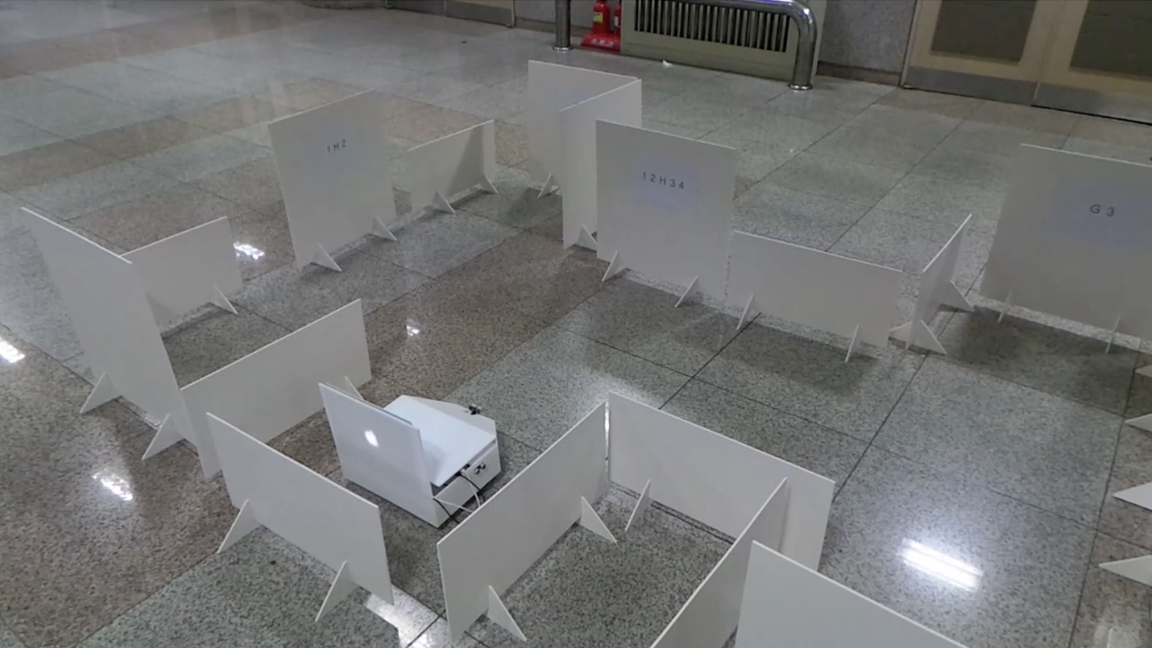

Once the implementation was working, we built a cover for the robot from acrylic sheets and ran it through a more complex maze with a signpost at every corner.

Our project was selected as a representative project of the Yonsei EE Dept., and we had the opportunity to demonstrate our work at E2FESTA (Capstone Project Expo) 2014 in Korea.

Designed and implemented by Minkyu Choi and Youngwook Do